In 2018, the world of the internet was shaken by the Cambridge Analytica scandal: an event in which the infamous British company was involved in an illegal exchange of data points with Facebook to better tailor their advertising campaigns for the Trump election and the Brexit referendum. The event has been quite complicated to dissect and has resulted in a couple of fines ($1.2 Billion and $0.8 billion) to which a specific GDPR section has put a final statement on data (acquisition, in particular). With this being said, the Cambridge Analytica scandal has been covered from a cybersecurity perspective as well, let’s dissect this properly.

Data Acquisition: The Process

In order to understand why the Cambridge Analytica scandal has become a cybersecurity case study, we must analyse the data acquisition process in today’s industry. As many of you may know, data science is vastly done by using Python-based tools. Python is a programming language which heavily relies on computing and processing numerical variables to, therefore, compress them into files which are readable by a database. Imagine Python as a calculator and, the results which are exported from it, are then used to better understand a particular user’s behaviours. Being a sophisticated programming language, but at the same time very limited to the numerical processing of a particular scenario, Python must be combined to other forms of programming, which are the ones (to reference) that are actually “rendering” those numbers which are being processed, like Javascript, C++ and Java, to list a few.

How Is Data Gathered?

The data acquisition process may have already raised a couple of questions in your head, like “how do I know when and where my data is being acquired?” The answer has slightly changed after GDPR: in fact, before the European legislation mentioned above, data could have been gathered anywhere from cookies to other forms of front-end based contacts. This has been a massive (in capitals, eventually) cybersecurity problem from a users’ perspective: imagine landing on an unsecured site which had a complex Python acquisition process. This could have automatically gathered your favourite web pages, your bookmarks, your passwords (eventually, but this should have been extremely advanced from a programming perspective) and so on. Data gathering has been the first red flag which led many cybersecurity experts asking the question “why is this happening?”.

Data-Flux: A (very much) Cybersecurity Problem

Naturally, what mentioned above (when done massively) has become quite a constant when developing a new cybersecurity tool/software or native application. In cybersecurity, the regulation of big data and its process (acquisition, processing and storing) is known with many terms. The latest one is known as “Data-Flux”. Data Flux is a vast term which basically refers to the mole of data a particular tool/app or a website (eventually) is allowed to gather from a specific user: for example, if a website (after having legally declared it) is acquiring cookies, site’s preferences, heatmaps (eventually) and more, then its Data Flux is dangerously high, a procedure which, in terms of transparency from a cybersecurity perspective, isn’t that great.

What Cambridge Analytica Did?

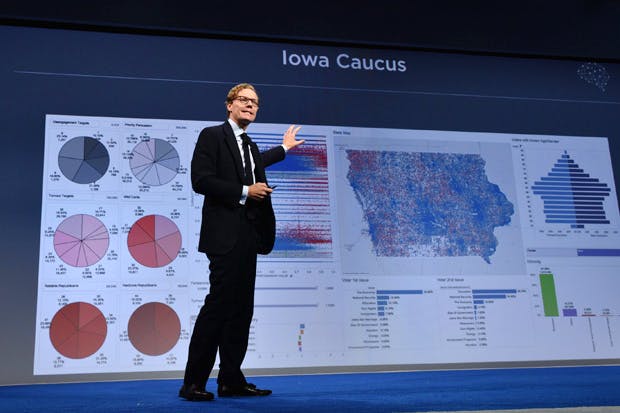

Now that you are aware of Data-Flux and relatively complex data acquisition procedures, let’s go back one second to the Cambridge Analytica scandal. Basically, the guys over at CA bought data points (which are raw, not rendered numerical values associated to a single user) in order to better tailor their marketing ads (on Facebook, mostly). The fact that these were bought from Facebook, leads us to the above-mentioned Data Flux problem: after thorough research, it has been noticed how they were extremely precise, in terms of preferences, geotags and more. This was, in fact, the main thing which led Facebook to the $1.2 Billion: too much data being acquired without regulation has, in fact, been valued for the highest Data-Flux ever. Cambridge Analytica knew this, and they, in fact, decided to approach Zuckerberg’s social network, instead of Twitter or other ones.

Is This A Cybersecurity-Related Case Study?

The evolution of cybersecurity on big data has definitely moved towards a very precise vision: whenever any form of data is acquired, it must be limited to small personal details and must not be detailed/moved towards the end goal of creating precise data points like the ones which Facebook was selling to CA last year. Ultimately, this has already been set into place after GDPR but, given the fact that such legislation only applies to European countries, this still doesn’t apply to an extremely vast surface like the US. The Cambridge Analytica scandal itself can’t be considered a cybersecurity case study per se, but it definitely has stated a couple (maybe dozens) of rules on the matter which will take place and will be applied in the nearest future, worldwide.

From A Developer’s Perspective

When it comes to Python, we must understand that its applications in today’s technology world are still in development. Data science applied to this specific programming language, in fact, has been a matter which emerged in 2016, so it’s fairly new. From a developer’s perspective, we will definitely see a far bigger evolution on the matter in the next couple of years, when all the libraries currently used for data-science (NumPy, for example) will be condensed in a simpler tool (there are plenty of startups, in fact, who are trying to create the CMS-equivalent of WordPress for Python tools). For now, Python is still the most unregulated, exploitable programming language applied to data science, as there are tons of ways for which a savvy data scientist could avoid GDPR regulations.

The Future Of Cyber Secured Data After GDPR

With all this being said, the future of cyber secured data is definitely still related to its regulation. After GDPR it has been stated, in fact, how just 15% of the sites in Europe have properly put those guidelines into place. GDPR has been acknowledged, of course, but it’s still not into place properly. The evolution of cyber secured data will definitely be, in fact, visible after all the guidelines on data acquisition will be set in motion worldwide. For now, even picturing the future of big data security is a hard task.

To Conclude

The Cambridge Analytica scandal may not be strictly considered as a cyber-security matter, in a technical way but it definitely moved a niche matter into the spotlight, in 2018. The buying process which was held by the British company has, in fact, not only generated a vast bigger interest from a commercial perspective towards data science as a whole but has also showcased what and why the entire data acquisition process (as of today) is something which should be completely redesigned and re-imagined.