This new decade has brought many new things. We are starting to think about the damage we have done to nature, and many people are proposing new ideas. Billionaires are taking part in this trend too, with ideas of their own. Elon Musk rose to the top with his idea of a car that reduces our carbon footprint, and we all know the impact Tesla has had. Nowadays everyone is following a similar path. Even BMW, with its i4 M50, is starting to take the EV business seriously, although it still sells gasoline-powered cars such as the BMW M3. This article, however, is not about electric vehicles. It is about something that comes along with the electric vehicle trend: autopilot, or the self-driving car.

Self-driving tech enables a car to drive itself using artificial intelligence. The AI learns from maps, visual input and sensor data to take the car from point A to point B. It can use not only normal camera feeds but also thermal imaging and depth sensors to understand its surroundings. All these technologies are combined to make self-driving precise and safe. But self-driving is not perfect yet, and some experts say it never will be! Apart from “taking away drivers’ jobs”, there are many issues with self-driving tech, or autopilot. Let me explain in detail.

Drivers’ habits

People who drive regularly develop abilities that help them guide a car efficiently and safely. When you drive, you pay closer attention and focus harder, because it is your duty to act quickly when things get challenging. You adapt to this and it becomes a habit. When you regularly let an AI drive your car, you lose that habit and your attention span shrinks. The AI takes control, of course, but none of the self-driving cars on the market are fully reliable yet. You still need to take control at some point to prevent accidents and crashes. It turns out that Elon Musk’s Tesla does a stupid thing to avoid legal issues, and it puts you in danger: if you do not act immediately, you can get seriously hurt or even die!

Tesla’s Autopilot reportedly disengages right before a crash. People have died in such crashes, and these cases cannot be dismissed as “once in a while mistakes”. Fixing this takes more than AI fine-tuning, and I don’t think Tesla will go for an easy solution. If Autopilot stayed engaged and crashed the car, it would open a legal path that puts Tesla in danger, so the company leaves the responsibility with the driver. Self-driving tech gives you a false sense of security and ruins your driving habits. No amount of fine-tuning will solve this, because there is more to it than some lines of code.

Cost of connectivity

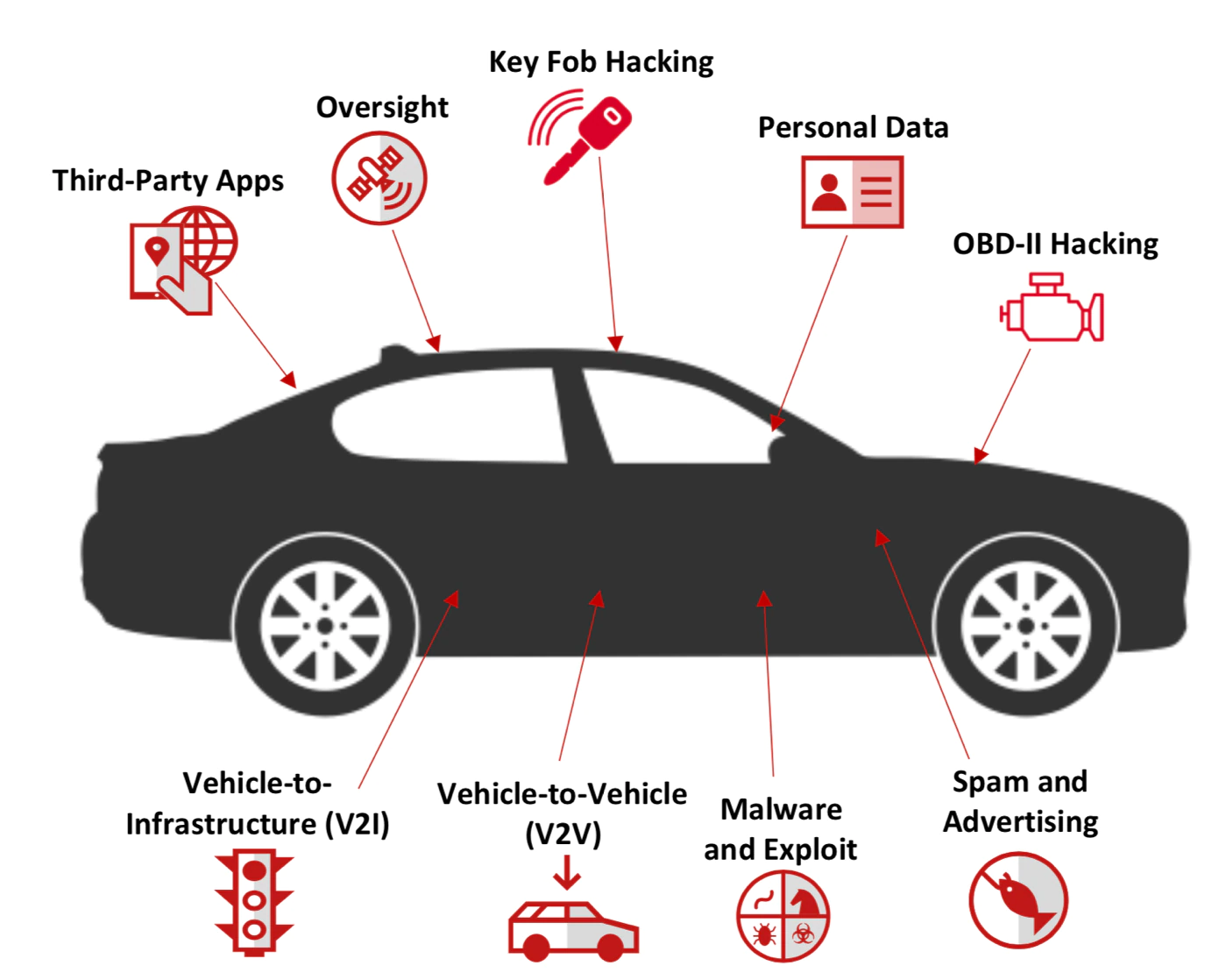

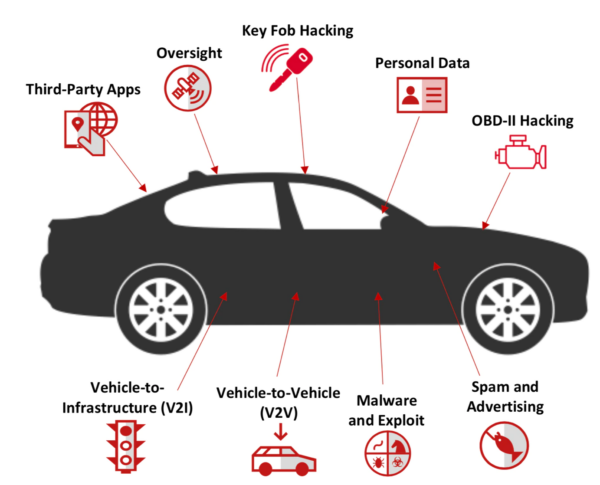

We live in a world with thousands of ways to connect to each other. Your phone connects you to the internet, which connects you to a platform, which connects you to people. Not only humans are connecting with each other, but machines too. You can reach your printer from anywhere with cloud printing, control your home electronics from your phone, get a security feed from the cameras around your house, and more. This is called the Internet of Things, or IoT. Modern cars also use IoT to give users more comfort, but that is a big concern too, because connectivity has a cost. Any connection can be intercepted, and a hidden vulnerability can let an attacker bypass whatever security is in place. Recently, someone used a zero-day exploit to unlock a Tesla’s doors. This proves the point.

Now imagine what else could be done with a zero-day vulnerability hidden inside autopilot software. Someone with enough skill could rewrite your car’s autopilot configuration so that it disengages at just the right moment to crash your car and kill you. Someone could lock you or your baby inside and turn off the AC until you suffocate. These are the obvious guesses. A more practical one is rewriting the autopilot software to extract the driver’s private information, driving routes and other data, then using it against them, or tracking and spying on them. Such things are already happening with our current tech, so imagine what the future holds. Unlike the legal issues, this one can of course be solved with better security standards and security features.

AI can never replace humans

Although many of us fear that self-driving cars will make taxi drivers obsolete and take their jobs, I see it differently. An AI has to be trained to drive in many conditions before it is fit for every driving scenario. A human, on the other hand, can adapt to a situation very quickly without prior knowledge. In America, roads are pretty predictable, everyone follows the same rules, and you see very few variations as you drive across the country. In other countries, conditions vary a lot. The road conditions you see today may not exist tomorrow. In countries like Bangladesh and India, you have to rely on quick reflexes to handle sudden changes such as puddles in the middle of the road, long broken segments, construction work and lots of other things.

Will a self-driving car steer itself with these conditions in mind? Can it take an alternate but unconventional route because there is a trench in the road ahead? Can I rely on it as a passenger who cannot take control of the car in such situations? I do not think these questions are easy to answer. People here constantly break traffic laws and overtake, and they regularly jaywalk and run in front of speeding cars. What decisions would a self-driving car make in those tight and extremely challenging situations? Can it keep us safe?

Conclusion

Modern technologies are bringing us comfort. We are working less because modern tech helps us do more with almost no effort. You can take out your phone and shop online without going anywhere. With self-driving tech, you will be able to take out your phone and order a driverless taxi in the future. But you need to know that every new technology comes with hundreds of issues that are very hard to work around. We don’t have privacy in 2021, and we are losing more of it as time passes. Self-driving cars have issues too. And since driving is a sensitive activity, you may want to think twice before you give an AI control of your own car. Thank you so much for reading.